When Will It Be Done? Unpacking Estimation Strategies for Product Managers

Estimation. It’s the ten-letter word that haunts every product manager’s dreams. We’ve all been there, haven’t we? Staring down the barrel of a new feature, trying to predict how long it will take to go from concept to code. It’s like trying to guess the final time of a marathon runner who’s never run a mile.

Why is it so hard? Because we’re not just building something new. We’re venturing into uncharted territory. If we knew exactly how long it would take, we’d be retracing our steps, not blazing a new trail. And even if we’ve trodden a similar path before, there’s always a chance of stumbling upon a hidden pitfall or a sudden detour.

We’ve been conditioned to believe that the key to successful project management is getting better at estimation. But what if that’s not the answer? What if, instead of trying to predict the unpredictable, we focused on learning from the journey?

Yes, you heard it right. Learning, not estimating.

In this article, we’re going to flip the script on estimation. We’ll explore why traditional estimation is like trying to hit a moving target blindfolded and how a learning approach can lead us to more successful outcomes. We’ll delve into the power of story mapping and how it can illuminate the unknowns in our path.

So, fasten your seatbelts, folks. We’re about to take a detour off the well-trodden path of estimation and venture into the landscape of learning.

The Problem with Traditional Estimation

Estimation in software development is often treated like a science. We gather data, analyze patterns, and make predictions based on past performance. It’s a logical approach, but it’s built on a shaky foundation: the assumption that the future will resemble the past.

Let’s unpack that a bit.

When we estimate a new feature, we look at similar features we’ve built before and use them as a benchmark. We say, ‘Feature X took us two weeks, so Feature Y should take about the same amount of time.’ It’s a reasonable assumption, but it overlooks a crucial factor: every feature is a unique entity with its own set of challenges and complexities.

Here’s an example. Imagine we’re building a new login feature. We’ve done it before, and it took us a week. So, we estimate a week for this one too. But this time, we’re integrating with a new third-party authentication service. We’ve never worked with this service before, and it turns out their API documentation is outdated. We spend days figuring it out, which pushes our one-week estimate to two.

This is the pitfall of traditional estimation. It assumes a level of familiarity and predictability that simply doesn’t exist in software development. Every new feature is a step into the unknown, a unique combination of tasks and challenges. And that’s something no amount of past data can accurately predict.

What About Story Points or Counting Stories?

In the world of software development, there are two common methods of estimation: story points and counting stories. Both methods are widely used, and both are pursued with the goal of improving estimation accuracy. But, as we’ll see, both methods have their limitations.

Story points are a measure of effort. A team assigns each user story a point value based on its perceived complexity. The idea is that over time, the team will get better at assigning points, and their estimates will become more accurate. But there’s a catch. Story points are subjective. What one team member considers a ‘5’ might be a ‘3’ to someone else. And even if the team agrees on a point value, there’s no guarantee that a ‘5’ story this sprint will take the same amount of time as a ‘5’ story next sprint.

Counting stories is another approach. Instead of assigning points, teams simply count the number of user stories they complete in a sprint. The assumption is that over time, the team will establish a consistent velocity, and they can use that velocity to predict future performance. But this method also has its flaws. Not all user stories are created equal. Some are straightforward and can be completed quickly, while others are more complex and take longer. Counting stories assumes a level of uniformity that doesn’t exist in reality.

Both of these methods are built on the assumption that the future will resemble the past. They assume that because we’ve completed a certain amount of work in the past, we’ll be able to complete a similar amount of work in the future. But as we’ve seen, software development is a journey into the unknown. Each new feature presents its own unique challenges and complexities.
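To make that concrete, here is a minimal Python sketch of a counting-stories forecast. The sprint history and backlog size are made up for illustration, and `sprints_needed` is a hypothetical helper, not part of any tool. It shows how the same history supports very different answers depending on which past sprints the future turns out to resemble.

```python
import math

# Hypothetical data: stories completed in each of the last five sprints.
completed_per_sprint = [6, 9, 4, 8, 5]
backlog_size = 30  # stories left to build (also made up)


def sprints_needed(backlog, stories_per_sprint):
    """Sprints required to finish `backlog` stories at a steady pace."""
    return math.ceil(backlog / stories_per_sprint)


average_velocity = sum(completed_per_sprint) / len(completed_per_sprint)

forecast = {
    "average pace": sprints_needed(backlog_size, average_velocity),
    "slowest sprint pace": sprints_needed(backlog_size, min(completed_per_sprint)),
    "fastest sprint pace": sprints_needed(backlog_size, max(completed_per_sprint)),
}
print(forecast)
```

With these numbers, the single-number forecast says 5 sprints, while the same history is consistent with anything from 4 to 8.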

So, if story points and counting stories are flawed approaches, what’s the alternative? The answer, as we’ll see, lies not in predicting, but in learning.

Learning from Estimates: Embrace Uncertainty with Probabilistic Thinking

New features are uncharted territory, a unique set of challenges and complexities. Trying to predict how long it will take can be a fool’s errand. But that doesn’t mean we can’t learn from the journey.

Instead of trying to predict the unpredictable, we learn from our estimates. This learner’s mindset acknowledges the inherent uncertainty in software development. It recognizes that estimates are not promises, but educated guesses. And like all guesses, they can be wrong. But each wrong guess is an opportunity to learn.

When we estimate a new feature, we’re making assumptions. We’re assuming we understand the feature’s requirements. We’re assuming we know how to implement it. We’re assuming we can foresee any potential obstacles or challenges. But these assumptions are often wrong. And it’s in these moments of surprise, these deviations from our expectations, that we learn the most.

For example, let’s say we estimate a new feature will take two weeks to implement. But during development, we encounter a technical hurdle we didn’t anticipate. It takes us an extra week to overcome this hurdle and complete the feature. This is a learning opportunity. It tells us there was something we didn’t know or understand when we made our estimate. And now that we’ve encountered and overcome this hurdle, we’re better equipped to handle similar challenges in the future.

Learning from estimates also helps us identify gaps in our knowledge. If our estimates are consistently off, it’s a sign that there’s something we don’t understand. Maybe we’re not fully grasping the requirements. Maybe there’s a part of our tech stack we’re not familiar with. Whatever the case, these inaccuracies in our estimates shine a light on what we do and do not know.

How can we capture this uncertainty in our estimates? This is where probabilistic estimation comes in. Instead of giving a single, definitive estimate, we provide a range with a confidence level attached. For example, we might estimate with 70% confidence that a feature will take between two and four weeks to implement. This range reflects the size of the feature, how our understanding grows as we work on it, and the pace at which we can realistically work. It acknowledges the inherent uncertainty and variability in software development.
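One way to arrive at such a range is to look at how far off past estimates were. The sketch below is only an illustration under stated assumptions: the list of past actual-to-estimate ratios is invented, `estimate_range` and `percentile` are hypothetical helpers, and the nearest-rank percentile is just one simple choice among several.

```python
import math


def percentile(sorted_values, p):
    """Nearest-rank percentile of an ascending list, with p in (0, 100]."""
    rank = max(1, math.ceil(p * len(sorted_values) / 100))
    return sorted_values[rank - 1]


def estimate_range(point_estimate_weeks, past_ratios, confidence_pct=70):
    """Scale a single estimate into a (low, high) range.

    past_ratios holds actual / estimated durations from earlier work.
    The range covers the central `confidence_pct` percent of those ratios.
    """
    ratios = sorted(past_ratios)
    tail = (100 - confidence_pct) / 2  # e.g. 15 on each side for 70%
    low = percentile(ratios, tail)
    high = percentile(ratios, 100 - tail)
    return point_estimate_weeks * low, point_estimate_weeks * high


# Made-up history: 1.0 means on time, 2.0 means it took twice as long.
history = [0.9, 1.0, 1.0, 1.1, 1.2, 1.3, 1.5, 1.6, 2.0, 2.5]
low, high = estimate_range(2, history)
print(low, high)  # prints "2.0 4.0"
```

With this invented history, a two-week gut estimate becomes "two to four weeks, with roughly 70% confidence". The numbers matter less than the habit: the width of the range is a direct readout of how much we have been surprised before.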

That’s how estimation becomes a tool for learning, not just predicting. And it becomes more useful when combined with another tool.

Story Mapping: A Tool for Estimation and Discovery

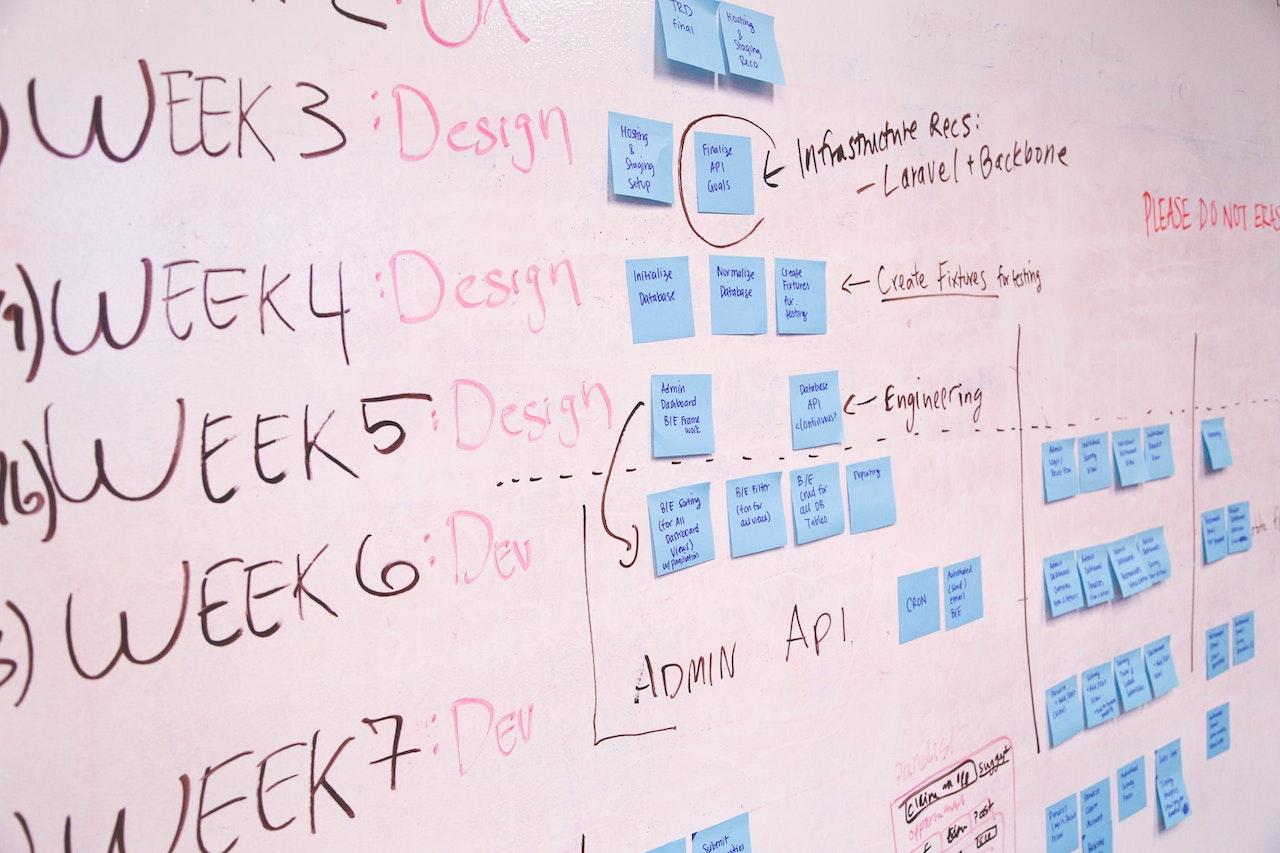

Story mapping is a powerful tool for product teams. It’s a visual exercise that helps teams understand their users’ journeys and the steps they take to achieve their goals. But story mapping isn’t just a tool for understanding user behavior. It’s also a tool for estimation and discovery.

When we create a story map, we’re breaking down a user’s journey into individual steps or activities. Each activity is supported by a piece of functionality that our software provides. By mapping out these activities, we can see the full scope of what we need to build. And this gives us a basis for estimation.

But here’s the key: we’re not just estimating the time it will take to build. We’re also identifying what we do and don’t know. Each story is an assumption. It’s an assumption about what the user needs, how they’ll use the software, and how we’ll implement the functionality. And as we’ve discussed, assumptions can be wrong.

This is where story mapping really shines. As we map out the user’s journey, we might realize there are steps we don’t understand. Maybe there’s a part of the journey we’ve overlooked. Maybe there’s a user need we haven’t considered. These are gaps in our knowledge, which are opportunities for learning.

Story mapping also allows us to explore different user journeys. Maybe there are alternative paths a user could take to achieve their goal. Maybe there are different types of users with different needs. By exploring these possibilities, we can discover new stories and new areas of uncertainty.

Once we’ve identified these gaps and uncertainties, we can flag them for further investigation. We can add them to our product backlog as ‘spikes’ – time-boxed research tasks designed to increase our understanding. This way, we’re not just adding new things to our backlog. We’re adding opportunities for learning and improvement.

As you can see, story mapping is a tool for planning and learning. It helps us estimate more effectively by revealing what we do and do not know. And by focusing on learning, we can continually improve our estimates, our understanding, and ultimately, the outcomes our software delivers for users.
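For teams that keep their story map in a spreadsheet or script, the step from "flagged unknown" to "spike in the backlog" can be sketched in a few lines of Python. Everything here is hypothetical: the `Activity` and `Story` classes, the `spikes_from_map` helper, the two-day time box, and the example map are assumptions made for illustration, not a standard format.

```python
from dataclasses import dataclass, field


@dataclass
class Story:
    title: str
    # Open questions the team raised while mapping this story.
    unknowns: list = field(default_factory=list)


@dataclass
class Activity:
    name: str  # one step in the user's journey
    stories: list = field(default_factory=list)


def spikes_from_map(activities, timebox_days=2):
    """Turn every flagged unknown into a time-boxed research task."""
    spikes = []
    for activity in activities:
        for story in activity.stories:
            for question in story.unknowns:
                spikes.append({
                    "title": f"Spike: {question}",
                    "story": story.title,
                    "timebox_days": timebox_days,
                })
    return spikes


# Invented example map for a login journey.
story_map = [
    Activity("Sign in", [
        Story("Log in with email and password"),
        Story("Log in with third-party provider",
              unknowns=["Is the provider's API documentation current?"]),
    ]),
    Activity("Recover account", [
        Story("Reset password", unknowns=["Which email service do we use?"]),
    ]),
]

spikes = spikes_from_map(story_map)
for spike in spikes:
    print(spike["title"], "->", spike["story"])
```

Stories with no unknowns produce no spikes, so the output doubles as a quick list of where the team’s understanding is thinnest.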

Case Study: Learning from Estimates in Practice

Consider a real-world example from a software development team working on a B2B backend product. The team was tasked with developing a new feature to process and manage bulk orders more efficiently. A key part of this feature was integration with an external inventory management system.

The team began by creating a story map of the new feature. They broke down the user’s journey into individual stories, each representing a piece of functionality the product needed to provide. As they mapped out these stories, they realized there were parts of the journey they didn’t fully understand. The integration with the external system was a significant unknown. They had never worked with this system before, and they weren’t sure how complex the integration would be. They flagged this story as high risk.

Next, the team estimated the time it would take to build each story. They also identified what they did and did not know about each story. They used a simple traffic light system to flag each story by risk: green for low risk (well-understood), yellow for medium risk (some unknowns), and red for high risk (many unknowns).

As they started development, the team kept track of their estimates and actual times. They noticed that their estimates for green stories were fairly accurate, but their estimates for yellow and red stories were often off. This was a clear sign that there was something they didn’t understand about these stories.

Instead of seeing these inaccuracies as failures, the team saw them as learning opportunities. They held regular retrospectives to discuss what they had learned from their estimates. They asked questions like: What made this story harder than we thought? What did we overlook in our initial estimate? What can we do differently next time?

Over time, the team noticed a pattern. The stories that were hardest to estimate were often those that involved new technologies or complex integrations. This insight helped the team improve their estimation process. They started allocating more time for these types of stories and conducting more upfront research to better understand the challenges involved.

By focusing on learning from their estimates, the team was able to continually improve their understanding and their process. They became more effective at estimating, yes, but more importantly, they became better at building software. And that’s the real power of learning from estimates.

This case study is a perfect example of how the principles we’ve discussed can be applied in practice. By focusing on learning, flagging risks, and using tools like story mapping, you too can improve your estimation process and deliver better software.
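If you want to try the same bookkeeping, a small script is enough. The following Python sketch assumes each story is recorded with a traffic-light flag, an estimate, and an actual duration. The `Story` class, the `overrun_by_risk` helper, and all of the numbers are hypothetical and are not taken from the team in the case study.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Story:
    title: str
    risk: str  # "green", "yellow", or "red"
    estimated_days: float
    actual_days: float


def overrun_by_risk(stories):
    """Average actual / estimated ratio for each risk color."""
    ratios = defaultdict(list)
    for story in stories:
        ratios[story.risk].append(story.actual_days / story.estimated_days)
    return {risk: sum(values) / len(values) for risk, values in ratios.items()}


# Invented records for illustration only.
stories = [
    Story("Order list view", "green", 2, 2),
    Story("CSV upload", "green", 4, 5),
    Story("Bulk validation rules", "yellow", 3, 4.5),
    Story("Inventory system integration", "red", 5, 10),
    Story("Inventory sync retries", "red", 4, 12),
]

result = overrun_by_risk(stories)
for risk, ratio in result.items():
    print(f"{risk}: actual time was {ratio:.2f}x the estimate")
```

A ratio near 1.0 means estimates held up. A ratio that climbs from green to yellow to red is a good prompt for the retrospective questions above.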

Conclusion: From Estimating to Learning

In the world of software development, estimation is often seen as a necessary evil. We’ve all been there: trying to predict how long a new feature will take, only to find that our estimates were way off. But as we’ve seen, the problem isn’t with estimation itself. The problem is with our approach to it.

We’ve been conditioned to view estimation as a way to predict the future. But the future is inherently unpredictable, especially when it comes to building something new. Instead of trying to get better at predicting the future, we should focus on getting better at learning from our estimates.

Here’s how you can start implementing these strategies in your team:

- Shift your mindset: Start viewing estimation as a learning tool, not a prediction tool. Remember, the goal isn’t to get better at estimating—it’s to get better at learning from your estimates.

- Use story mapping: This tool can help you break down a feature into individual stories and identify gaps in your knowledge. It’s a great way to visualize the user journey and highlight areas of uncertainty.

- Flag risks: Use a simple traffic light system to flag each story by risk. This can help you identify what you do and do not know about each story, and where you need to focus your learning efforts.

- Learn from your estimates: Hold regular retrospectives to discuss what you’ve learned from your estimates. Ask questions like: What made this story harder than we thought? What did we overlook in our initial estimate? What can we do differently next time?

- Embrace uncertainty: Remember, it’s okay not to know everything. In fact, acknowledging what you don’t know is the first step towards learning and improving.

By adopting these strategies, you can turn estimation from a source of frustration into a powerful learning tool. So why not give it a try? The future may be unpredictable, but with the right approach, it can also be a source of valuable insights and learning opportunities.